With iOS 14, Apple brings a long list of upgrades to its extremely popular operating system.

This is good news not only for end users but also for developers, who can start thinking about new features to build and offer. But Apple took developers by surprise by releasing iOS 14 with just one day’s notice, which didn’t sit well with the developer community. Given its disputes with Epic Games, Spotify, and others, Apple needs to show that it is on the developers’ side. Nonetheless, a new iOS release is always good news for developers and users. Here are the top 5 features developers should aim for in their apps when developing for iOS 14.

App Clips

App Clips can change the way users access the functionality of your iOS 14 app. Think about making a quick payment from a wallet app: instead of opening the app, you just scan a QR code and the relevant screen pops up almost instantly. App Clips can also help apps get more downloads. Build just one key feature as an App Clip and make the QR code available; as soon as the user scans it, they see the feature and can tap Get to download the full app. A news app is one example: instead of opening the whole app, the news article opens on its own, and if the user likes the content, they can download the app to read more. This has implications in many areas, such as payments, food delivery, and quick restaurant menus. App Clips are not limited to QR codes. They can also be launched from iMessage links, NFC tags, Safari banners, and place cards in Maps.
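To make the idea concrete, here is a minimal sketch of how an App Clip might decide which screen to show from the URL that launched it. The `ClipRoute` type, the `example.com` host, the `/pay` path, and the `merchant` query item are all hypothetical names chosen for illustration; only `URLComponents` comes from Foundation.

```swift
import Foundation

// Hypothetical routes for an illustrative wallet-style App Clip.
enum ClipRoute: Equatable {
    case pay(merchantID: String)
    case unknown
}

// Maps an invocation URL to a route.
// Assumption: links look like https://example.com/pay?merchant=<id>.
func route(for url: URL) -> ClipRoute {
    guard let components = URLComponents(url: url, resolvingAgainstBaseURL: false),
          components.host == "example.com",
          components.path == "/pay",
          let merchant = components.queryItems?
              .first(where: { $0.name == "merchant" })?.value,
          !merchant.isEmpty
    else {
        // Anything we do not recognise falls back to a default screen.
        return .unknown
    }
    return .pay(merchantID: merchant)
}
```

In a real App Clip, the URL typically arrives as the `webpageURL` of an `NSUserActivity` whose type is `NSUserActivityTypeBrowsingWeb`, and a function like `route(for:)` would then pick the screen to present.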

Widgets

For over a decade, Apple users were stuck with the same icon grids spread across pages on the iPhone. With widgets, the home screen finally gets some much-needed flair. This is one feature Android users have always touted in front of Apple users, but not anymore. Apple has released easy-to-use, practical widgets for features like the clock, weather, battery life, and photos. App developers can also use iOS 14 widgets to deliver small, key pieces of content. For example, a stock trading app can show the prices of your top shares or the current value of your portfolio on the home screen. A meditation app can show a timer for the next meditation cycle. A water reminder app can show the user how many glasses are left to drink for the day. The uses are endless, and iOS 14 app developers should consider taking advantage of them. The WidgetKit framework is simple to use and build upon, and developers who are familiar with Apple’s other SDKs should adapt to it quickly.
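As a rough sketch of the water reminder idea, the snippet below shows what a small WidgetKit timeline provider could look like. The names `glassesLeft`, `WaterEntry`, `WaterProvider`, and `WaterWidget`, the daily goal of 8, the sample count of 3, and the 30-minute refresh are assumptions for illustration; a real widget would read its data from storage shared with the main app.

```swift
import Foundation

// Pure helper: glasses still to drink today, never negative.
func glassesLeft(goal: Int, consumed: Int) -> Int {
    return max(0, goal - consumed)
}

#if canImport(WidgetKit) && canImport(SwiftUI)
import WidgetKit
import SwiftUI

// One point in time that the widget can render.
@available(iOS 14.0, macOS 11.0, *)
struct WaterEntry: TimelineEntry {
    let date: Date
    let glassesLeft: Int
}

@available(iOS 14.0, macOS 11.0, *)
struct WaterProvider: TimelineProvider {
    // Hypothetical sample values; replace with data shared by the app.
    private func currentEntry() -> WaterEntry {
        WaterEntry(date: Date(), glassesLeft: glassesLeft(goal: 8, consumed: 3))
    }

    func placeholder(in context: Context) -> WaterEntry {
        currentEntry()
    }

    func getSnapshot(in context: Context, completion: @escaping (WaterEntry) -> Void) {
        completion(currentEntry())
    }

    func getTimeline(in context: Context, completion: @escaping (Timeline<WaterEntry>) -> Void) {
        let entry = currentEntry()
        // Ask the system to request a fresh timeline in about 30 minutes.
        let refresh = Calendar.current.date(byAdding: .minute, value: 30, to: entry.date) ?? entry.date
        completion(Timeline(entries: [entry], policy: .after(refresh)))
    }
}

// In a widget extension this type would be marked with @main.
@available(iOS 14.0, macOS 11.0, *)
struct WaterWidget: Widget {
    var body: some WidgetConfiguration {
        StaticConfiguration(kind: "WaterWidget", provider: WaterProvider()) { entry in
            Text("\(entry.glassesLeft) glasses left")
        }
        .configurationDisplayName("Water Reminder")
        .description("Shows how many glasses are left today.")
        .supportedFamilies([.systemSmall])
    }
}
#endif
```

The system decides when widgets actually refresh, so the reload policy is a request rather than a guarantee.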

Powerful On-Device Neural Engine

With the more powerful A14 Bionic chip, Apple has ensured that it is packing a powerhouse into its devices. The devices now have dedicated Neural Engine cores that developers can leverage. Many developers currently rely on large cloud servers to run ML models for vision, text, and other processing tasks. With Core ML, you can instead use the on-device Neural Engine in iOS 14 to run many machine learning tasks locally, which can make your app faster and more efficient.
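A minimal sketch of loading a model for on-device inference with Core ML follows. The function names and the idea of passing in a compiled model URL are assumptions for illustration. Setting `computeUnits` to `.all` only allows Core ML to use the Neural Engine where it is available; the framework still chooses the hardware for each model.

```swift
import Foundation

#if canImport(CoreML)
import CoreML

// Builds a configuration that lets Core ML pick among CPU, GPU,
// and the Neural Engine.
func onDeviceConfiguration() -> MLModelConfiguration {
    let configuration = MLModelConfiguration()
    configuration.computeUnits = .all
    return configuration
}

// Loads a compiled Core ML model (.mlmodelc) from disk.
// Assumption: the caller supplies the URL of a model bundled with the app.
func loadOnDeviceModel(at compiledModelURL: URL) throws -> MLModel {
    return try MLModel(contentsOf: compiledModelURL,
                       configuration: onDeviceConfiguration())
}
#endif
```

Predictions made with the loaded model run on the device, so the input data does not need to be sent to a server.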

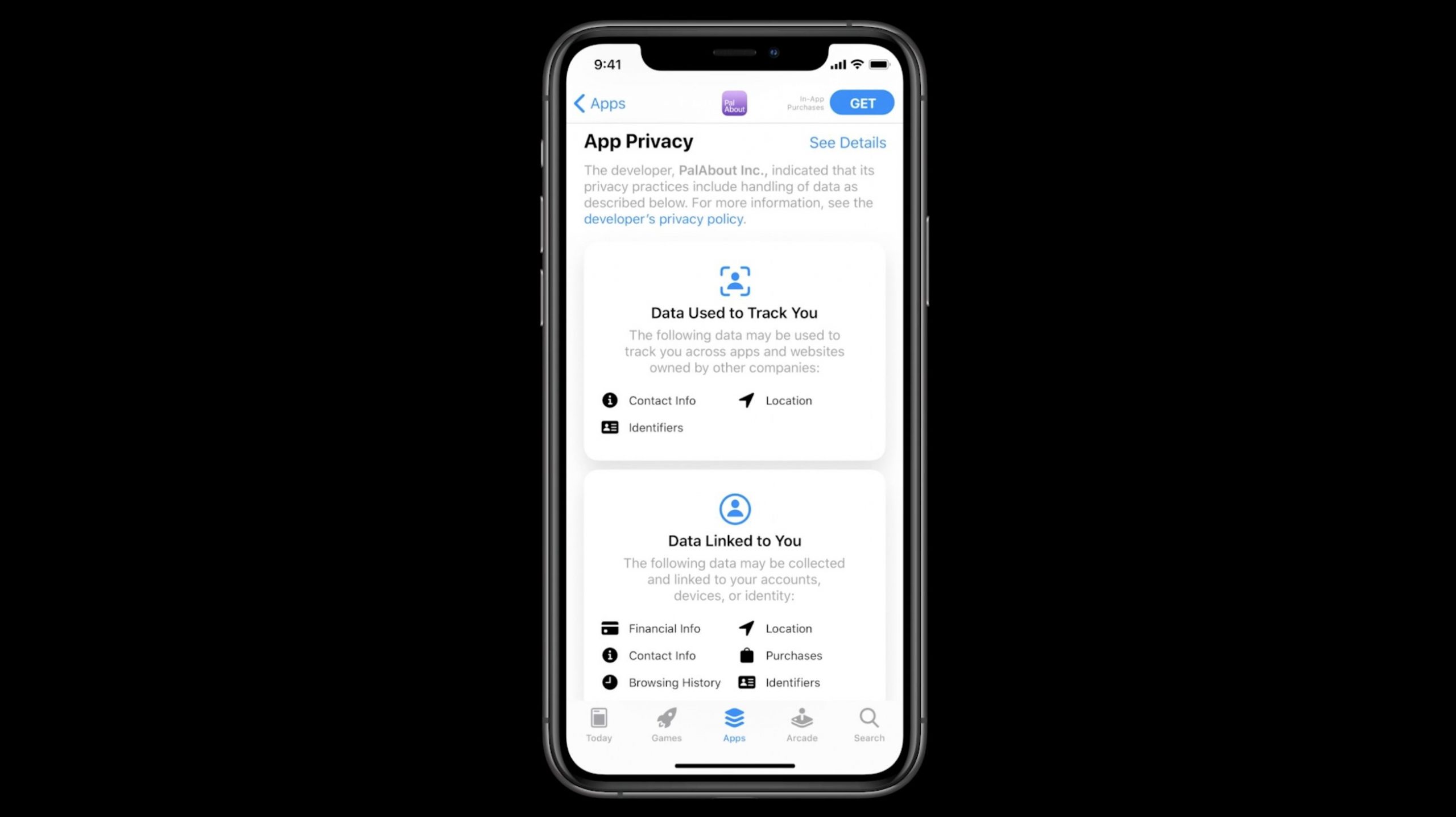

Increased User Privacy

Apps on iOS 14 will be required to ask for more user permissions. Developers should not see this as a negative, since it should only increase users’ trust in their iOS 14 apps. If you need to track a user’s behavior outside your app, you will have to ask for the user’s consent. In addition, actions such as an app reading copied data from the clipboard are flagged to the user with a notification banner. When developing for iOS 14, make sure you factor these requirements in from the start. If you are a product owner working with a developer, share these guidelines with your iOS 14 developer.
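The consent step can be sketched with the AppTrackingTransparency framework as shown below. The helper names `mayTrack` and `askForTrackingConsent` are hypothetical, and the sketch assumes the app's Info.plist already contains an `NSUserTrackingUsageDescription` entry explaining why tracking is requested.

```swift
import Foundation

#if canImport(AppTrackingTransparency)
import AppTrackingTransparency

// Only an explicit "authorized" answer permits tracking; denied,
// restricted, and not-yet-determined are all treated as "no".
@available(iOS 14.0, macOS 11.0, tvOS 14.0, *)
func mayTrack(_ status: ATTrackingManager.AuthorizationStatus) -> Bool {
    return status == .authorized
}

// Shows the system consent prompt (if it has not been answered yet)
// and reports the outcome on the main queue.
@available(iOS 14.0, macOS 11.0, tvOS 14.0, *)
func askForTrackingConsent(then handler: @escaping (Bool) -> Void) {
    ATTrackingManager.requestTrackingAuthorization { status in
        DispatchQueue.main.async {
            handler(mayTrack(status))
        }
    }
}
#endif
```

Treating every status other than `.authorized` as a refusal keeps the app on the safe side when the user has not answered yet.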

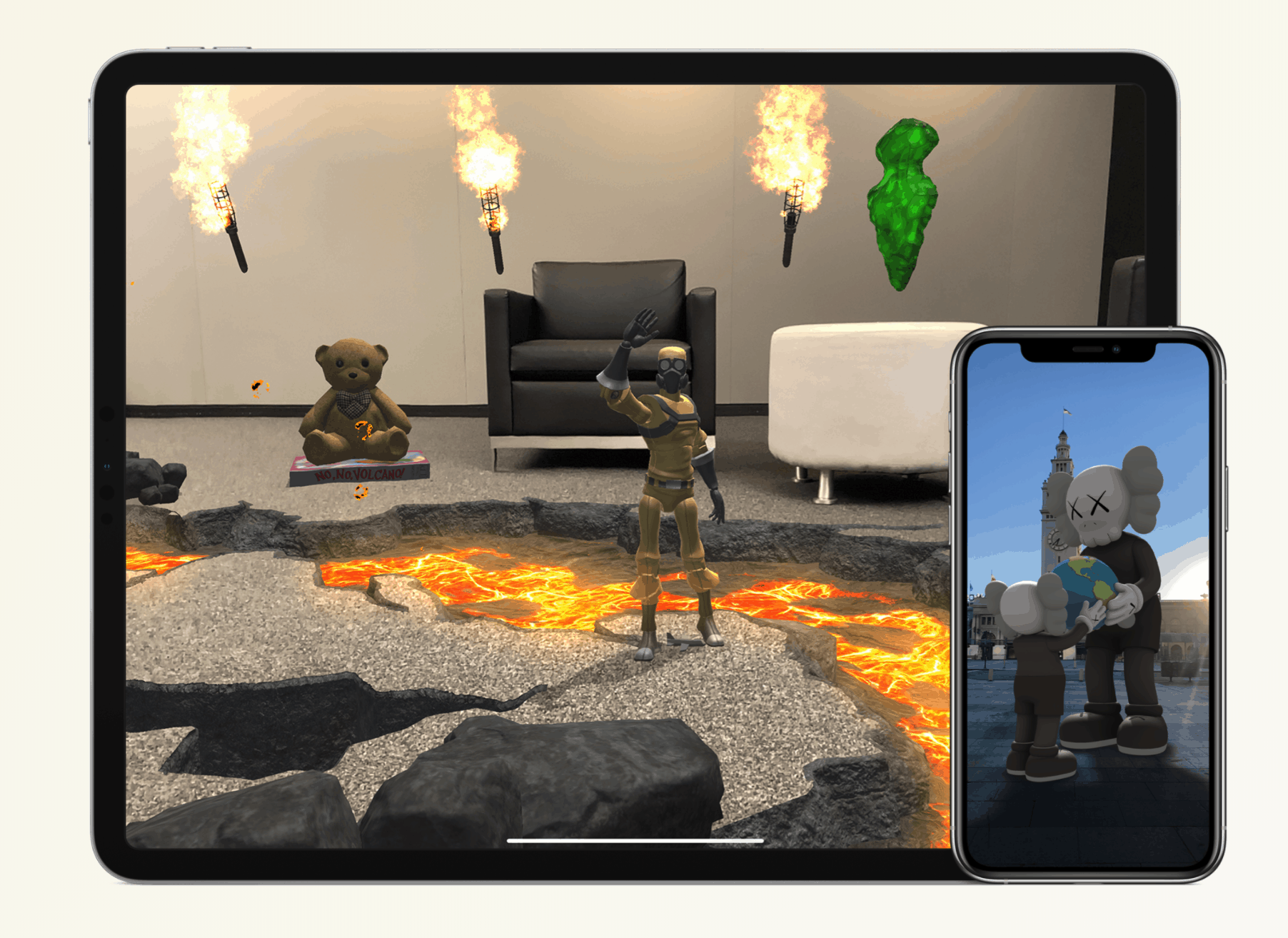

ARKit 4

The new ARKit 4 beta brings a Depth API that can be a game-changer for on-device image and video recognition. With real-time tracking models, you can combine AR and ML to track a human body in a video in real time. New AR creation tools like Reality Composer are making it easier for new developers to jump on the augmented reality bandwagon.

Get in Touch
